Why the world should not adopt China’s QR-code system

November 25, 2020

With an unexpected suggestion at the G20 summit this weekend, China pushed for worldwide adoption of its “COVID QR-code system” in the fight against Corona. We call on world leaders to be extremely vigilant.

By Catelijne Muller and Virginia Dignum

A while ago, we spearheaded an awareness campaign around the use of Corona-apps. In a letter to the Dutch Government, more than 180 scientists and experts expressed their many concerns over these apps. The letter led to a broad and fierce discussion in the media and the Dutch Parliament, and to severe scrutiny of the app development process. Compared to the Chinese QR-code system, though, the Corona-apps are child’s play.

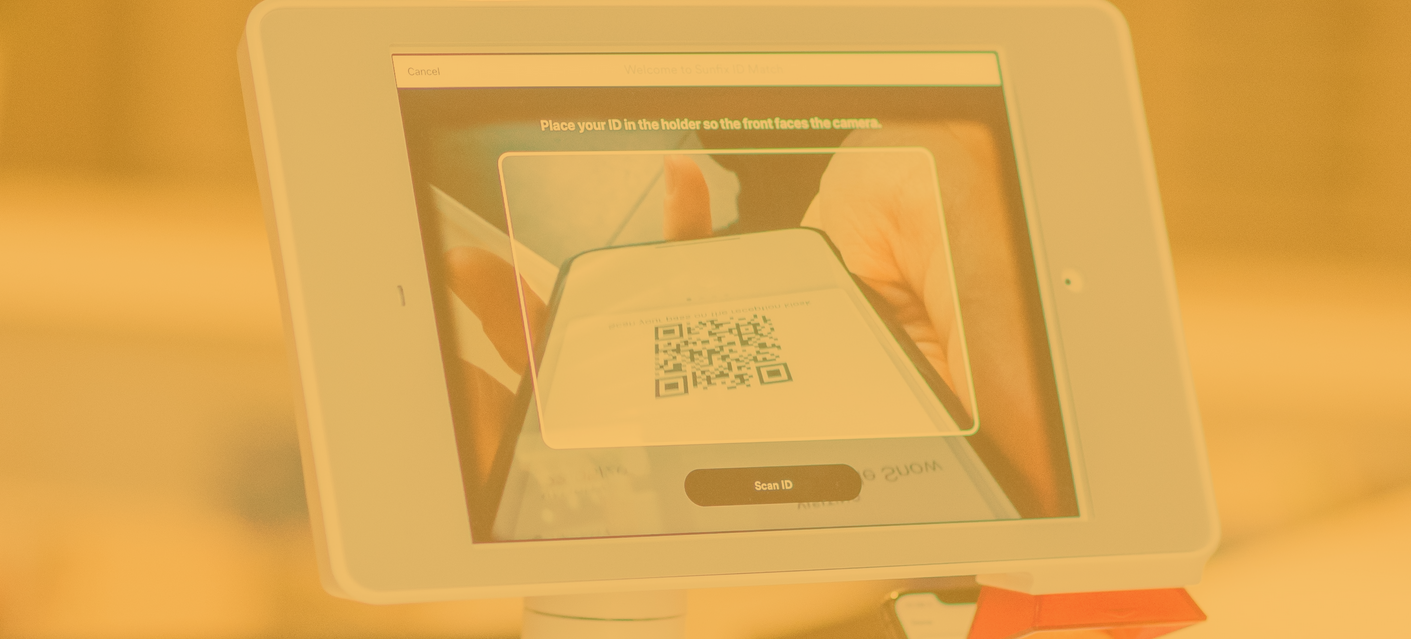

The Chinese COVID QR-code system is basically a health risk warning system that renders a green, yellow or red QR code for each citizen on their smartphone. The colors represent the level of ‘Corona risk’ the citizen poses at any given moment. Broadly speaking, green means a person poses no risk, yellow means that a person has potentially been exposed to the Corona virus over the past 14 days, and red means a person is infected or has a high chance of being infected with the virus. In many Chinese cities, citizens are asked to show their QR code before entering public transportation, public buildings, malls, airports, etc.

These kinds of applications are the reason we started the Responsible AI & Corona project. Under normal circumstances, the idea of color-coding an entire population based on their health risk would be categorically rejected. In fact, in its Ethics Guidelines for Trustworthy AI, the High Level Expert Group on AI already classified these types of applications as raising ‘critical concerns’ because ‘(…) Any form of citizen scoring can lead to the loss of […] autonomy and endanger the principle of non-discrimination’.

Make no mistake, this is citizen scoring, and if it weren’t for this crisis, China would think twice before pushing it on the world stage. In times of crisis, we tend to be more receptive to these kinds of ‘invasive’ technologies. We even tend to believe that the technology will save us. We ‘turn a blind eye’ to fundamental rights, ethical values and even effectiveness. The motto is often: no harm, no foul. Many also believe that invasive technologies such as these will be dismantled after the crisis. Unfortunately, past crises have shown that once a technology is in place, it doesn’t go away easily, especially not when it provides authorities with increased powers to monitor their citizens.

Why do we need to be vigilant with this “QR-code system”?

In itself, this QR code hardly seems an invasive or complex technology. What is worrying is not the QR code itself, but rather the system’s potential to monitor and score citizens. The ethical, legal and societal impacts of this system are profound.

Technical robustness

First things first, though: how does the system work? According to the New York Times, which reported on the Chinese system in March of this year, it is unclear how the system assigns someone a red, yellow or green QR code at any given time. The color changes on its own, but users do not know what makes it change from green to yellow or red and back. It is also unclear whether there are any options for redress in case of an erroneous classification. The consequences of a color change can be quite harsh, because the color determines a person’s freedom to move about, enter shops, travel, etc. The article does reveal that the creators of the system say it uses big data to determine whether a person is at risk of being contagious. We assume that this is a continuous process that triggers the color changes.

What does this mean exactly? AI techniques such as machine learning and deep learning are likely being used here to sift through large amounts of data and ‘determine’ if someone gets a green, yellow or red QR-code. First of all, these techniques do not ‘determine’ anything with absolute certainty. They merely find ‘patterns’ in data. These patterns can be logical, but often prove to be completely irrelevant or even wrong. It should also be noted that the ‘decisions’ these techniques provide are categorizations, not based on personal merits but on characteristics that a person happens to share with others. These systems do not make decisions. They make predictions.

Data-driven prediction systems have proliferated over the past couple of years. There is AI predicting movie and music preference, but also recidivism, credit scores, crimes, social benefits fraud, voter preference, sexual orientation, depression and so on. We have also seen the negative impact these systems can have because of their brittleness. Examples of bias, discrimination even, are plentiful. They are black boxes, because they cannot explain why a certain outcome was reached. It is often unclear what data is being used and prioritized. It ranges from personal data to behavioral data (think of social media activities, our location, our online searches), and from credit data to health data (from apps, smartwatches, etc.). How inferences from this data are drawn is unknown. Predictive systems have been causing serious societal and personal harm.

So how is this relevant for the COVID QR-code system? Given that it apparently uses big data to predict a person’s health risk level, the system technically faces exactly the same challenges. The system does not determine the health risk level; it only makes a prediction. That prediction is likely to be based predominantly on characteristics that someone happens to share with others. These characteristics could be irrelevant or even wrong, making the predictions biased or incorrect.

Ethical impact

Apart from the critical concerns raised over this type of ‘citizen scoring’, the High Level Expert Group on AI also identified an ethical value that is particularly relevant in this case: ‘Respect for human dignity’. The group explains that “(…) In this [AI, red.] context respect for human dignity entails that all people are treated with respect due to them as moral subjects, rather than merely as objects to be sifted, sorted, scored, herded, conditioned or manipulated.” Sifting, sorting and scoring is exactly what this QR-code system does, while potentially also herding, conditioning or manipulating people into certain types of behavior. On top of that, the system could open up opportunities for continuous monitoring and surveillance.

Human rights and red lines

In her report for the Council of Europe’s Ad Hoc Committee on AI (CAHAI), “The impact of AI on Human Rights, Democracy and the Rule of Law”, Catelijne Muller describes the broad impact that AI can have on virtually all human rights. She even proposes drawing red lines for “certain AI-systems or uses that are considered to be too impactful to be left uncontrolled or unregulated or to even be allowed.” According to Muller, red lines should be drawn for “(…) AI-enabled social scoring, (…) profiling and scoring through biometric and behaviour recognition (…) and AI-powered mass surveillance.” She suggests a ban or a moratorium for these applications or, at the very least, strict conditions for exceptional use. So from a human rights perspective, too, the QR-code system crosses a ‘red line’.

GDPR

In the EU, the GDPR also raises some barriers to the use of such a system, albeit only to some extent. Fully automated decision-making and profiling are restricted insofar as they use personal data. The QR-code system is a form of automated decision-making, but it is unclear whether it is fully automated or retains a ‘human-in-the-loop’. It is also unclear, though likely, that the profiling is (or can be) done predominantly with non-personal data. This raises concerns about the level of protection the GDPR offers, as it could mean that some form of QR-code system might be allowed in the EU.

Question zero

When it comes to these kinds of extremely invasive AI-systems, we have always stressed the need to pause for reflection and find the appropriate answer to what we call ‘question zero’: Should we use, or allow the use of, this particular AI-system? Or are we mesmerized by techno-solutionism? Digital technology can contribute to solving grand problems, but it is seldom the solution in itself. Often, a far less invasive solution is right in front of our eyes.

Fortunately, the European Commission appears to be of the opinion that citizen scoring has no place in the EU. Should global leaders nevertheless decide, because of the dire circumstances of this crisis, to consider using a COVID QR-code-like system in any shape or form, we strongly advise them to pause for reflection and find that answer to ‘question zero’. We urge them to see the risks it will bring to our societies. We challenge them to imagine the world it will shape. A COVID QR-code system will set a precedent for a future that was unthinkable even six months ago, a future that will be very hard to turn back from. This is the AI-Rubicon.

This op-ed is part of the Responsible AI & Corona Project